A (Brief) History of AI

From Alan Turing's imitation game to autonomous agents — eight decades of machines learning to think, fail, and think again. A structured overview of artificial intelligence's defining moments.

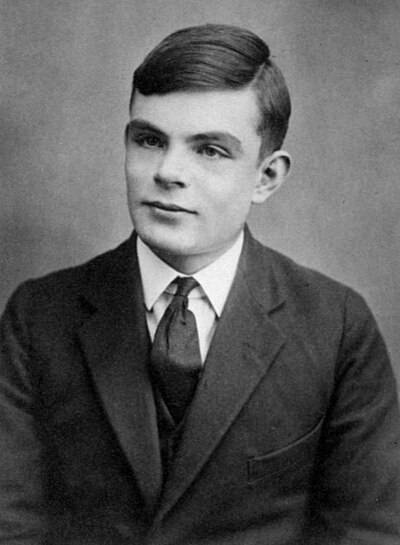

The Turing Test

Alan Turing asks "Can Machines Think?" He proposes the Imitation Game — if a machine can fool a human, perhaps it thinks. The single most influential question in AI history.

Dartmouth Conference

McCarthy, Minsky, Shannon & friends coin "Artificial Intelligence" at a summer workshop. The field is officially named. They estimate they'll crack it in one summer. Reader: they did not.

The Perceptron

Frank Rosenblatt builds the first neural network hardware. The New York Times declares it will "walk, talk, see, write, reproduce itself and be conscious." Expectations were calibrated differently then.

ELIZA — First Chatbot

Weizenbaum creates ELIZA at MIT — a program mimicking a therapist. Users form emotional attachments. Weizenbaum is horrified by their credulity. The AI therapist debate begins 60 years early.

First AI Winter

Minsky & Papert demonstrate that single-layer perceptrons cannot learn XOR. Funding collapses. The UK Lighthill Report calls AI "disappointing." DARPA withdraws. The field enters hibernation.

Expert Systems Boom

AI rebounds via rule-based Expert Systems encoding human domain knowledge. XCON saves DEC $40M per year. Lisp machines proliferate. Then the rules become impossible to maintain at scale.

Second AI Winter

Expert systems fail to scale. Lisp machine market implodes. DARPA cuts funding again. Neural networks appear dead. The field shrinks to academic enclaves. Second full collapse in 20 years.

Deep Blue Defeats Kasparov

IBM's Deep Blue defeats world chess champion Garry Kasparov 3.5–2.5. Kasparov alleges human intervention in the machine's play. IBM declines a rematch and retires Deep Blue. The dispute remains unresolved to this day.

We are on the edge of change comparable to the rise of human life on Earth.

Hinton's Breakthrough

Geoffrey Hinton and colleagues publish "A Fast Learning Algorithm for Deep Belief Nets." Deep neural networks can finally be trained effectively. The AI community largely ignores it. The slow fuse is lit.

AlexNet — ImageNet

AlexNet wins the ImageNet challenge with a top-5 error rate more than 10 percentage points below the runner-up. GPU acceleration meets deep learning. Every major lab pivots overnight. The modern era begins here.

GANs — Generative AI Born

Goodfellow invents GANs at 2am after a debate. Two competing networks fight each other. Generative AI is born. Creative industries begin a long, complicated reckoning.

AlphaGo — Go Defeated

DeepMind's AlphaGo defeats Lee Sedol 4–1 at Go, a game with more board states than atoms in the observable universe. Lee retires in 2019, calling AI an entity that cannot be defeated.

The Transformer Paper

Google researchers publish "Attention Is All You Need": eight authors, one paper. It drops recurrence in favour of attention alone. Nearly every modern foundation model runs on this architecture.

BERT & GPT-1

Google releases BERT; OpenAI ships GPT-1. Pre-trained language models set new marks on language-understanding benchmarks. The scaling arms race begins: more compute translates into more capability.

GPT-3 — 175B Parameters

OpenAI scales to 175 billion parameters. GPT-3 writes essays and generates working code from a handful of examples. Access is rationed through an API waitlist. Microsoft, which invested $1 billion in OpenAI in 2019, takes an exclusive licence to the model.

Image Generation Emerges

DALL·E, Midjourney, and Stable Diffusion make text-to-image accessible at consumer scale. Generative media proliferates. Professional illustrators face an unprecedented market disruption.

ChatGPT — Mass Adoption

OpenAI releases ChatGPT. One million users in five days. An estimated one hundred million in two months, at the time the fastest consumer product adoption on record. Google internally declares Code Red.

Proliferation

Google launches Bard → Gemini. Meta open-sources LLaMA. Anthropic releases Claude. Mistral emerges from Paris. The landscape fragments rapidly across dozens of competing foundation models.

Agents & Reasoning

The paradigm shifts from assistants to autonomous agents. Cursor writes code. Devin handles software engineering. OpenAI o1 implements chain-of-thought reasoning. DeepSeek demonstrates efficiency breakthroughs.

Open Horizon

Multimodal. Autonomous agents. Drug discovery. Scientific reasoning. Physical robotics. The AGI debate intensifies. Whether or not general intelligence arrives this decade, the economic transformation is already underway.

Agents Enter the Workforce

AI agents handle entire workflows — legal research, financial analysis, medical imaging, code review. White-collar disruption accelerates faster than policy can respond. The assistant era ends. The colleague era begins.

Multimodal Becomes Standard

Text, image, audio, video, and code collapse into unified models. AI that sees, hears, reads, and acts simultaneously becomes the baseline. The word "chatbot" is retired. Good riddance.

Scientific Discovery Accelerates

AlphaFold showed the way. The next wave extends into drug design, materials science, climate modelling, and fundamental physics. Discoveries arrive that we weren't specifically looking for.

Regulatory Frameworks Emerge

The EU AI Act is a beginning, not an end. Expect global divergence: EU tightens, US fragments by sector, China integrates AI into state infrastructure. An international body is proposed — and disputed.

Physical AI — Robots in Context

Foundation models extend into physical embodiment. Robots navigate, manipulate, and reason in unstructured environments. Warehouses, hospitals, construction. Physical labour re-prices again.

Personalised AI — One Per Human

Every person carries a persistent AI companion trained on their life: communication style, medical history, professional knowledge. Memory and continuity become design challenges more than technical ones.

AGI — The Contested Threshold

Whether a system reaches AGI depends entirely on definition. Narrow tasks: already surpassed. Common sense: rapidly closing. Flexible real-world agency at human level: the decade's open question.

The Unknown Unknowns

Every previous forecast missed the thing that actually happened. No one predicted GPT-3's emergent capabilities. No one predicted ChatGPT's adoption curve. The most significant development of the decade is probably not on any roadmap.

Alan Turing

Proposed the Imitation Game in 1950, framing the foundational question of machine intelligence. His theoretical work on computation underpins every AI system ever built.

John McCarthy

Organised the 1956 Dartmouth Conference and named the field. Invented Lisp, still influential today. Spent 50 years insisting AI was closer than it was.

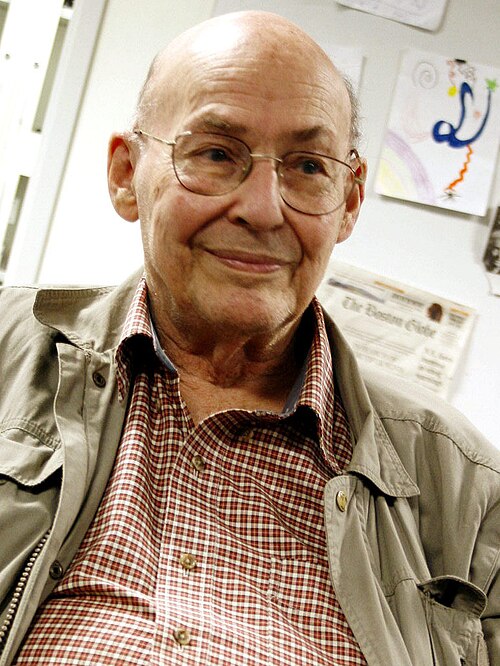

Marvin Minsky

Co-founded MIT's AI Lab and shaped decades of AI research. Famously helped kill the first wave of neural networks with "Perceptrons" (1969), and later regretted it.

Geoffrey Hinton

Showed that deep neural networks could be trained effectively (2006). His student Krizhevsky built AlexNet (2012), triggering the modern AI era. Left Google in 2023 to warn about AI risk. Nobel Prize in Physics, 2024.

Yann LeCun

Pioneered convolutional neural networks in the late 1980s, the architecture behind a generation of image recognition systems. Chief AI Scientist at Meta. Vocal critic of AGI doom narratives.

Yoshua Bengio

Shared the 2018 Turing Award with Hinton and LeCun for deep learning. Founded Mila, the Quebec AI Institute. Now among the most prominent voices for AI safety and regulation.

Demis Hassabis

Co-founded DeepMind in 2010; sold to Google in 2014. Led AlphaGo, AlphaFold, and Gemini. Nobel Prize in Chemistry, 2024, for AlphaFold's protein structure breakthrough.

Ilya Sutskever

Co-authored AlexNet, then co-founded OpenAI. Chief Scientist during GPT-2, GPT-3, GPT-4, and ChatGPT. Led the November 2023 board revolt against Altman, and lost. Founded Safe Superintelligence in 2024.

Sam Altman

Led Y Combinator before joining OpenAI as CEO. Oversaw GPT-3, DALL·E, and ChatGPT's historic launch. Fired and reinstated within five days in 2023. Now navigating OpenAI's transformation into a for-profit.

Dario Amodei

Former VP of Research at OpenAI. Left with his sister Daniela in 2021 to found Anthropic, focused on AI safety and Constitutional AI. Creator of Claude.

Fei-Fei Li

Built ImageNet, the dataset that made the 2012 deep learning revolution possible. Former Chief Scientist at Google Cloud. Now leads Stanford HAI and World Labs, focused on spatial intelligence.

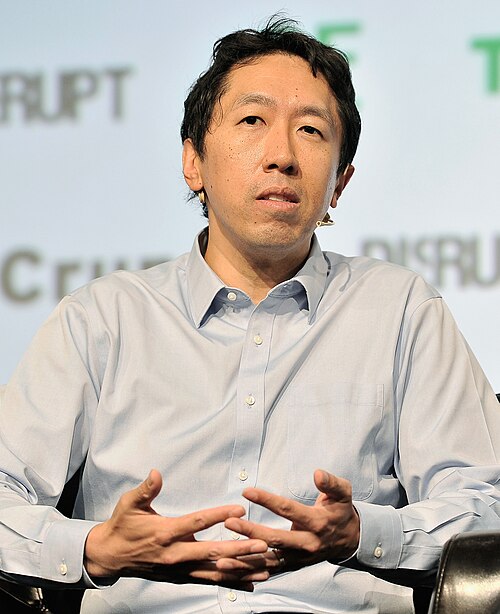

Andrew Ng

Co-founded Google Brain and Coursera. Former Chief Scientist at Baidu. His online courses have trained millions of AI practitioners. Consistently one of the most influential voices on AI's practical future.
